One man has encountered an unusual problem with his Tesla: It keeps detecting humans that aren't there, right above tombstones in graveyards.

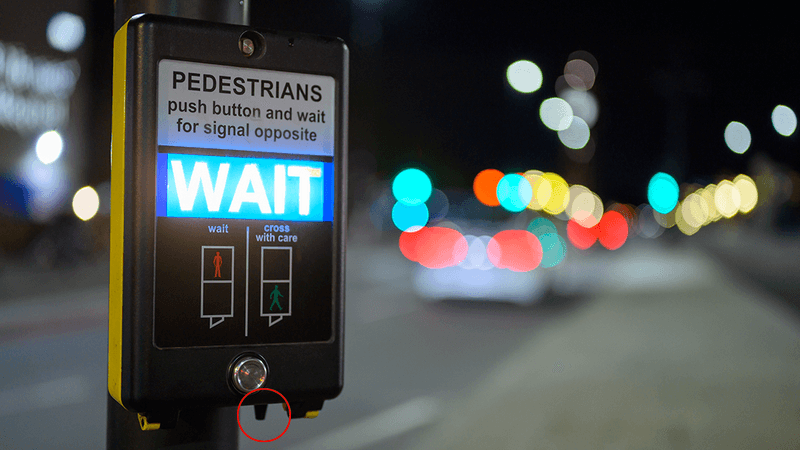

A video by @iam3dgar on TikTok shows him taking a slow drive through a graveyard, set to spooky music. As it goes on, he films the dashboard as it starts to display human figures on screen, apparently showing that there are human-shaped hazards lurking among the graves, which is creepy enough as it is.

But when the camera pans up, there are no humans in sight.

So, what's happening here?

Well, either the graveyard is haunted and the Tesla is clairvoyant, or there's something going on with the sensors and/or the hazard-detecting software. It's perfectly possible that one of the car's eight cameras or 12 ultrasonic sensors is simply faulty. Or the sensors are fine, and the software is interpreting something they have picked up in an unusual way.

The glitches are false positives, or detections of hazards that aren't there. It could be that the car is picking up the flowers close to the camera and interpreting them as hazards further away. In terms of safety, it's better that automated vehicles return more false positives than false negatives. Consider which is worse: falsely detecting a child running into the road, or failing to detect one who really is there. Hence, when algorithms are tweaked, it's better to play it safe and have the software err on the side of returning more false positives than false negatives.
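To make that trade-off concrete, here is a minimal sketch in Python. It is not Tesla's software, and nothing here comes from the video: the confidence scores, the ground-truth labels, and the `count_errors` helper are all made up for illustration. It simply shows how lowering a detection threshold swaps missed people for phantom ones.

```python
def count_errors(scores, labels, threshold):
    """Count false positives and false negatives at a given confidence threshold.

    scores: hypothetical detector confidences that an object is a person.
    labels: ground truth, True if a person is really there.
    """
    false_positives = 0
    false_negatives = 0
    for score, is_person in zip(scores, labels):
        detected = score >= threshold
        if detected and not is_person:
            false_positives += 1  # a "ghost": detected, but nobody there
        elif not detected and is_person:
            false_negatives += 1  # a real person the system missed
    return false_positives, false_negatives


# Invented example data, not real sensor readings.
scores = [0.95, 0.80, 0.55, 0.40, 0.35, 0.20]
labels = [True, True, True, False, True, False]

cautious = count_errors(scores, labels, threshold=0.3)
strict = count_errors(scores, labels, threshold=0.6)

print("cautious (threshold 0.3):", cautious)  # (1, 0): one ghost, nobody missed
print("strict (threshold 0.6):", strict)      # (0, 2): no ghosts, two people missed
```

With the cautious threshold, the toy detector flags one phantom but misses nobody; with the strict one, the phantom disappears, but so do two real people.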

However, that's not to say that false positives aren't a problem, too. Tesla have recalled cars in the past for being prone to "false-positive braking."

“False positives are really dangerous,” Ed Olson, the founder of the self-driving shuttle company May Mobility, told Wired. “A car that’s slamming on the brakes unexpectedly is likely to get into wrecks.”

And sometimes, just sometimes, it can convince you that you're detecting the rising of the dead.