Lab rats going missing might be causing havoc with biomedical research results on cancer and strokes, according to a new study.

Although much of the criticism against animal testing is concerned with the ethical considerations of animal welfare, this recent study attempted to show that the "animal model" of preclinical research is riddled with bias and validity problems.

The study, headed by Ulrich Dirnagl at Charité Universitätsmedizin in Berlin, was published this week in PLOS Biology. The research looked at animals going “missing” from many results in scientific papers and the effect this had on the validity of their results.

Many of these missing animals were either unreported deaths from unrelated illness, "Pinky and the Brain"-like getaway plots (kind of) or even the removal of certain animals if their behavior was too erratic. Essentially, rodent test subjects missing from the results can be explained either by death or chance, called “random loss,” or by researchers removing animals that could undermine the expected results in order to fit the initial hypothesis, called “biased removal.”

They analyzed the effects of attrition – the gradual loss of animals – by simulating data of preclinical studies. Attempting to be representative of a real study, they used eight treated animals and eight untreated animals. They then simulated expected rates of “random loss” and “biased removal.”

“Random loss” decreased the validity of results by reducing the number of subjects the statistics can work with. This is particularly pertinent to trials that use animals, as many countries have ethical guidelines or laws requiring animal research to use as few animals as possible.

In the most severe case, they also found that “biased removal” of animals can increase the true positive rate from 37 percent to about 80 percent. This essentially means the researchers might – consciously or unconsciously – remove animals from their test and so roughly double how effective a certain drug appears to be.
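To make the idea concrete, here is a minimal Python sketch of this kind of simulation. It is not the authors' code: the group size of eight comes from the study as described above, but the effect size, the number of animals removed, the known standard deviation and the simple z-test are all assumptions chosen for illustration, and the function names are hypothetical.

```python
import random
from statistics import NormalDist, mean

N_PER_GROUP = 8   # group size quoted in the article
ALPHA = 0.05      # assumed significance threshold
SIGMA = 1.0       # assumed (known) standard deviation of the outcome


def is_significant(treated, control):
    """Two-sided z-test on the difference in means, assuming SIGMA is known."""
    se = SIGMA * (1 / len(treated) + 1 / len(control)) ** 0.5
    z = (mean(treated) - mean(control)) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_value < ALPHA


def random_loss(group, k, rng):
    """Drop k animals chosen purely by chance."""
    return rng.sample(group, len(group) - k)


def biased_removal(treated, control, k):
    """Drop the k animals in each group that most contradict the hypothesis."""
    kept_treated = sorted(treated)[k:]                  # lose the k weakest responders
    kept_control = sorted(control)[:len(control) - k]   # lose the k highest controls
    return kept_treated, kept_control


def positive_rate(effect, k, mode, n_sims=4000, seed=0):
    """Fraction of simulated experiments that reach significance.

    mode is "none", "random" or "biased" (hypothetical labels for this sketch).
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_sims):
        treated = [rng.gauss(effect, SIGMA) for _ in range(N_PER_GROUP)]
        control = [rng.gauss(0.0, SIGMA) for _ in range(N_PER_GROUP)]
        if mode == "random":
            treated = random_loss(treated, k, rng)
            control = random_loss(control, k, rng)
        elif mode == "biased":
            treated, control = biased_removal(treated, control, k)
        hits += is_significant(treated, control)
    return hits / n_sims


if __name__ == "__main__":
    for mode, k in [("none", 0), ("random", 2), ("biased", 2)]:
        print(mode, positive_rate(effect=0.75, k=k, mode=mode))
```

Under these assumptions, random loss lowers the share of experiments that detect a real effect, while biased removal raises it, and raises it even when the drug does nothing (`effect=0.0`). The exact figures depend on the assumed effect size and test, so they should not be expected to match the 37 and 80 percent reported in the paper.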

Interestingly, and perhaps more definitively, the research then undertook a meta-analysis of over 235 preclinical experiments on cancer and strokes that involved laboratory rodents. They compared the number of animals reported in the methods section with the number reported in the results section. They found over half the experiments had an “unclear” change in animal numbers, while only a small proportion, as few as 1/15 (about 7 percent) in cancer and 13/38 (about 34 percent) in stroke experiments, explained the missing animals.

As the authors themselves admit, from the data and sample size it's hard to gather exactly how widespread “biased removal” is in preclinical studies. However, the paper concluded by giving advice on how to achieve greater transparency within the scientific community. This could be achieved by authors specifying the rate and causes of attrition, as well as accounting for it in their statistical analysis.

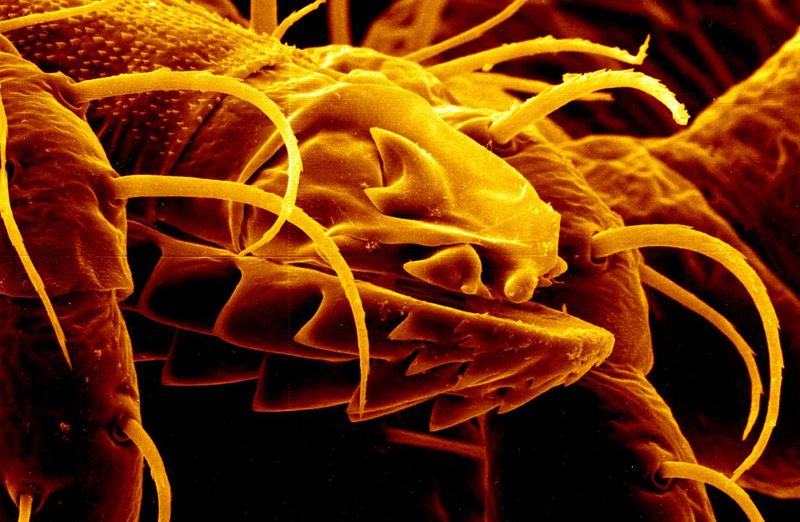

Main image credit: Rick Eh?/Flickr. (CC BY-NC-ND 2.0)