To hunt insects in total darkness, bats emit precisely timed acoustic signals and then quickly analyze the resulting echoes. Toothed whales use biological sonar to navigate through murky waters. Using a virtual echo-acoustic space technique, researchers show that sonar-guided orientation can be successfully taught to people as well, and that the skill isn't just about supersensitive hearing. The findings were published in Royal Society Open Science this week.

There's been rapidly increasing scientific interest in the ability of blind people to use echo-acoustics to perceive the space immediately around them. Some listen for the echoes of self-generated sounds with a high degree of precision throughout their daily lives: estimating distances, detecting obstacles, and discriminating between objects of different textures and sizes. But so far, little is known about the sensory-motor interactions that underlie effective echo-acoustic orientation. What's more, as Science explains, people don't have the large, mobile ears of bats, which can swivel them like radar dishes to detect echoes off tiny flying insects.

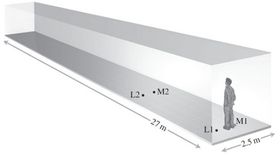

To see whether people can be trained to do this despite their less specialized ears, Ludwig Wallmeier and Lutz Wiegrebe of Ludwig Maximilian University in Munich recruited eight subjects with normal vision to explore a long corridor of concrete walls and PVC flooring just by clicking their tongues. Most got "quite good" after two to three weeks of training, Wiegrebe tells Science, and could reliably orient themselves and walk down the corridor without running into the walls, using only clicks and echoes.

The duo also designed a virtual version of the corridor, the virtual echo-acoustic space (VEAS), to test how much head and body movements, rather than hearing alone, contribute to accuracy. To make sure their VEAS was realistic, the team enlisted the help of two blind professional echolocation teachers who had taught themselves to echolocate as children.

The blindfolded participants sat in a chair and their tongue clicks were picked up by a headset microphone and played back via headphones as if they were still in the corridor. The goal was still to line their bodies up with the center of the corridor, and they were tested in two ways. First, they used a joystick to rotate the virtual corridor without moving their bodies. Second, the corridor was fixed and they could swivel their chairs and move their heads.

When they couldn't move their bodies or heads, they teeter-tottered down the virtual corridor and couldn't correct themselves before hitting a virtual wall. But when the corridor was fixed and they were free to move their bodies and heads, Science reports, the blindfolded recruits were able to quickly right themselves.

The duo hopes that a virtual reality program like this one could help blind people learn to use echolocation.