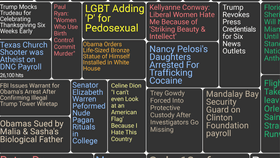

The spread of fabricated stories on social media has taken the world by surprise, and no one, from the social media giants to governments, really knows how to tackle it. There have been suggestions that the problem is so great it may even have swung the 2016 US presidential election in favor of Donald Trump by depressing Hillary Clinton's voter turnout on election day.

Fake news is a real problem. Which is why scientists are trying hard to understand the extent of fake news properly, as well as how and why lies spread so effectively online.

In 2017, a study on fake news that offered some clues went viral, with coverage from many big sites and newspapers.

The study, published in Nature Human Behaviour and covered by everyone from Scientific American to Buzzfeed News, suggested that with an overload of false information out there competing for your attention on social media, people have difficulty separating what's real from what's fake. Because our attention spans are limited, as is the time we can spend assessing whether something is real or fake, low-quality information can spread relatively well compared to high-quality information.

"Quality is not a necessary ingredient for explaining popularity patterns in online social networks," the study authors wrote in their paper at the time. "Paradoxically, our behavioral mechanisms to cope with information overload may... [increase] the spread of misinformation [making] us vulnerable to manipulation."

One of the key (depressing) findings was that "quality and popularity of information are weakly correlated". Whether something is factual has very little to do with whether it's popular.

But it turns out there's a problem with the quality of information in the study.

Last week it was retracted by the authors after they discovered that one of its key findings did not hold up. As reported by Retraction Watch, the authors spotted errors in their own data whilst attempting to replicate their figures. When they recalculated those figures, they found that a key claim was not supported.

"In the revised figure the distribution of high-quality meme popularity predicted by the model is substantially broader than that of low-quality memes, which do not become popular," they wrote in the retraction.

"Thus, the original conclusion, that the model predicts that low-quality information is just as likely to go viral as high-quality information, is not supported. All other results in the Letter remain valid."

The authors were not trying to mislead anybody, however, so this isn't a case of fake news. It was just human error, followed by a correction.

"For me it's very embarrassing," Filippo Menczer, one of the study's authors, told Rolling Stone. "But errors occur and of course when we find them we have to correct them."